Table of Contents

ToggleAI-assisted “vibe coding” is transforming development velocity — but it’s also shipping silent vulnerabilities at scale. Here’s what’s at stake and how to fix it.

What is Vibe Coding?

The term vibe coding was coined in 2025 to describe a new programming modality where developers largely surrender line-by-line control to AI coding assistants. Instead of writing code manually, a vibe coder describes what they want in natural language — “build me a REST API that accepts user uploads and stores them in S3” — and accepts the AI’s generated output with minimal review.

The appeal is undeniable. Skilled developers report building in hours what previously took weeks. Non-developers (“citizen developers”) are shipping functional applications for the first time. Startup MVPs that once required a full engineering team can be built solo.

But here’s the critical issue: AI models optimize for working code, not secure code. When an LLM generates a user authentication flow, it will produce code that authenticates users correctly — but may silently include a SQL injection vulnerability, an insecure password storage method, or a session token that never expires.

Key stat: A 2024 Stanford study found that developers who used AI code assistants were significantly more likely to introduce security vulnerabilities than those who coded manually — and were also less likely to notice those vulnerabilities during code review, because they trusted the AI output.

Why Vibe Coding Creates Security Risks

Understanding the root cause of vibe coding vulnerabilities helps you prevent them. Several structural factors make AI-generated code inherently prone to security issues:

- Training data skew: LLMs train on billions of lines of public code, much of which contains security antipatterns, outdated practices, and known-vulnerable snippets.

- Context blindness: The AI doesn’t know your threat model, compliance requirements, or the sensitivity of your data. It generates generic solutions.

- Review atrophy: Vibe coders accept AI output more readily than hand-written code. The psychological sense that “the AI probably got it right” suppresses critical review.

- Dependency blindness: AI assistants freely introduce third-party libraries without assessing their security posture, maintenance status, or known CVEs.

- Prompt injection susceptibility: AI-generated backends that process user input and pass it back to AI models are vulnerable to a new class of attacks.

Top 10 Vibe Coding Security Risks to Avoid

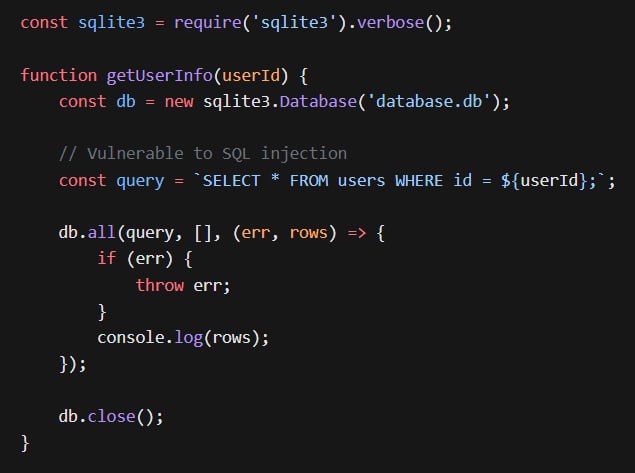

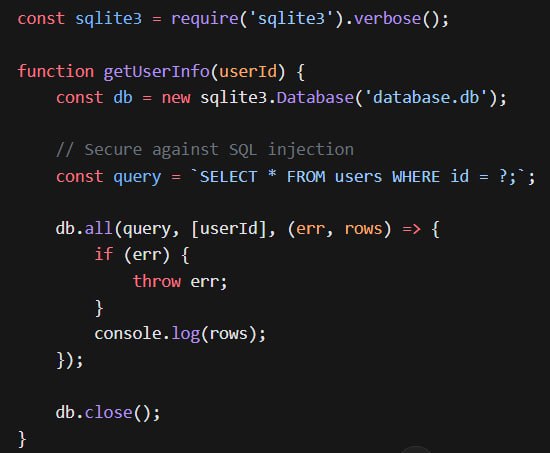

1 SQL Injection in AI-Generated Queries (Critical)

AI models frequently generate database queries using string concatenation rather than parameterized queries. This is one of the oldest and most dangerous vulnerabilities, yet it remains the most common issue in AI-generated code.

Fix: Always use parameterized queries or an ORM. Never interpolate user input directly into SQL strings. Add automated SAST scanning to your CI pipeline.

2 Hardcoded API Keys and Secrets (Critical)

When you paste code into an AI assistant that includes secrets or ask it to “add authentication,” it will often hardcode example credentials or API keys directly in the source files. These end up committed to git repositories — permanently.

Fix: Use environment variables for all secrets. Add secret scanning (e.g., GitGuardian, GitHub’s built-in secret scanning) to block commits with credentials. Rotate any keys that were ever hardcoded.

3 Insecure Direct Object References (IDOR)

AI-generated APIs commonly expose resource IDs directly and forget to verify that the requesting user actually owns or is authorized to access that resource. A URL like /api/orders/1234 becomes accessible to any authenticated user — not just the order’s owner.

Fix: Always include ownership checks in every data access operation. Don’t assume authentication implies authorization. Use UUIDs instead of sequential IDs to limit enumeration.

4 Broken Authentication and Session Management (High)

AI-generated authentication code frequently uses inadequate JWT expiration times, stores tokens insecurely (localStorage instead of httpOnly cookies), or implements custom cryptography instead of battle-tested libraries.

Fix: Use established auth libraries (Auth0, Supabase Auth, NextAuth.js). Set short JWT expiration (15 minutes + refresh tokens). Store tokens in httpOnly, SameSite=Strict cookies.

5 Cross-Site Scripting (XSS) via Unescaped Output (High)

AI-generated frontend code often uses innerHTML to render data, especially when generating “quick” UI components. If any of that data comes from user input, you have a stored or reflected XSS vulnerability.

Fix: Never use innerHTML with dynamic content. Use textContent, or a properly configured React/Vue component. Enable Content Security Policy headers.

6 Unsafe File Upload Handling (High)

When asked to “add file upload support,” AI models routinely generate code that accepts any file type, stores files in predictable paths, and serves them back without proper content-type validation — creating RCE and directory traversal risks.

Fix: Validate file type by MIME type and magic bytes (not extension). Rename all uploaded files to random UUIDs. Serve user uploads from a separate subdomain with restrictive CSP headers.

7 Missing Rate Limiting and Brute Force Protection (High)

AI-generated login endpoints, API keys, and OTP handlers rarely include rate limiting. An attacker can make unlimited requests without triggering any defense.

Fix: Implement rate limiting at the infrastructure level (Cloudflare, AWS WAF) and application level (express-rate-limit, upstash/ratelimit). Add exponential backoff and account lockout.

8 Vulnerable or Abandoned Third-Party Dependencies (Medium)

AI models recommend libraries based on training data, which may be months or years old. They’ll suggest packages with known CVEs, deprecated security modules, or zero maintenance.

Fix: Run npm audit / pip-audit on every generated dependency list. Check the library’s GitHub for recent commits and open security issues before adding it.

9 Sensitive Data Exposure in Logs (Medium)

AI-generated debugging code commonly logs request bodies, headers, and responses — including passwords, tokens, and PII — to console or log files without redaction.

Fix: Implement structured logging with automatic PII scrubbing. Define a list of sensitive field names to redact. Never log request bodies in production without careful filtering.

10 Prompt Injection via User Input (Medium)

If your vibe-coded app passes user input to an LLM without sanitization, malicious users can inject instructions that override your system prompt — gaining unauthorized capabilities or extracting sensitive system information.

Fix: Strictly separate user input from system instructions. Validate and sanitize all input passed to LLMs. Use structured formats (JSON schemas) instead of free-text prompts for critical operations.

Injection Flaws in AI-Generated Code

Injection vulnerabilities deserve special attention because they are simultaneously the most common issues in AI-generated code and the most severe. Beyond SQL injection, vibe coders must watch for:

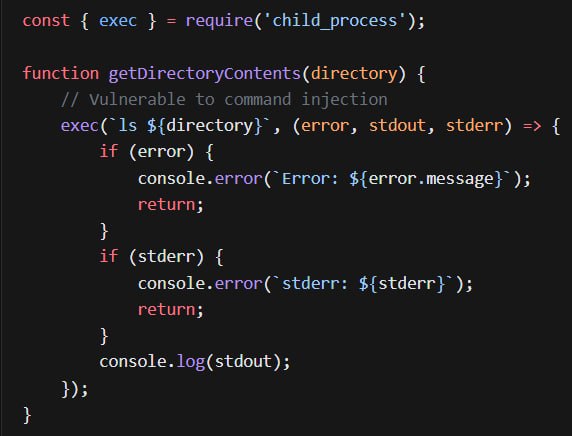

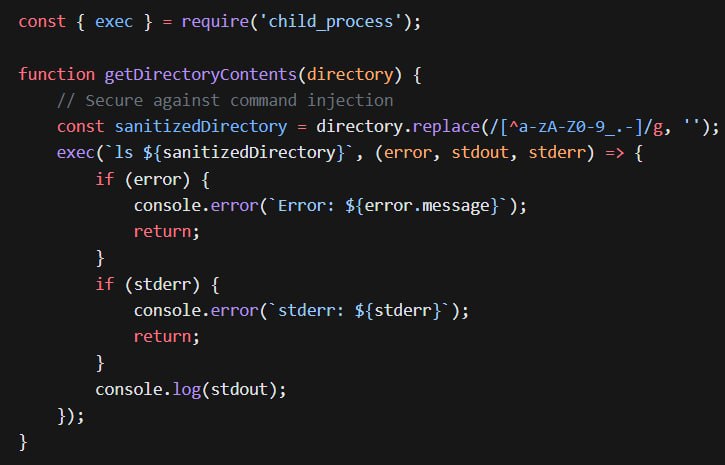

Command Injection

AI models generating code that interacts with the operating system — running shell commands, executing scripts — frequently interpolate user input directly into shell commands. An input of ; rm -rf / can have catastrophic results.

Data Leak Vulnerabilities in Vibe-Coded Apps

Data leaks in vibe-coded applications often manifest not as dramatic breaches but as subtle misconfiguration — data silently accessible to anyone who knows where to look:

- Over-permissive CORS: AI frequently generates

Access-Control-Allow-Origin: *to “fix” cross-origin errors, exposing your API to any website. - Excessive data returns: AI-generated queries return full model objects (including hashed passwords, internal IDs, admin flags) when only one or two fields are needed.

- Unprotected debug endpoints: AI-generated code often includes

/debug,/health, or/metricsendpoints that expose internal system state. - Missing encryption at rest: Databases and file stores are often created without encryption, because AI models don’t proactively suggest it unless asked explicitly.

Best Practices for Secure Vibe Coding

The Secure Vibe Coding Checklist

Run automated security scanning (SAST/DAST) on every AI-generated PR before merge

Explicitly prompt your AI assistant: “Identify all security vulnerabilities in this code”

Use infrastructure-level security (WAF, rate limiting, DDoS protection) as your safety net

Audit every third-party package before adding it; prefer well-maintained, minimal libraries

Implement secret scanning in your git hooks and CI pipeline

Write threat model documentation before asking AI to build security-sensitive features

Use established auth providers (Auth0, Clerk) rather than AI-generated auth code

Never deploy without reviewing data access patterns and ownership checks

Enable database row-level security (RLS) for multi-tenant applications

Conduct a security review with a human developer or security tool before launch

Prompt Engineering for Security

You can dramatically improve the security of AI-generated code by including security requirements in your prompts. Instead of asking “build a user login endpoint,” ask:

“Build a user login endpoint. Requirements: use parameterized SQL queries, store passwords with bcrypt (cost factor ≥ 12), return httpOnly SameSite=Strict cookies for JWT tokens, enforce rate limiting of 5 attempts per 15 minutes per IP, and include proper input validation. Flag any security concerns in your response.”

This approach consistently produces more secure output. Think of security requirements as mandatory specification — not optional context.

Vibe Coding in the Larger Context of Secure Software Development

Vibe coding doesn’t have to mean insecure coding. The developers who use AI assistants most successfully treat AI as a powerful junior developer — one who needs code review, clear requirements, and a safety-net of automated testing and scanning.

The security tooling ecosystem is rapidly adapting. Tools like Snyk, Semgrep, and GitHub’s CodeQL integrate directly into AI-assisted development workflows, automatically scanning every AI-generated commit. Infrastructure providers offer security defaults that protect even poorly-written applications — WAFs, secret detection, vulnerability scanning.

The fundamental lesson is this: the speed benefits of vibe coding are real, but they require a corresponding investment in automated security infrastructure. Every hour saved with AI assistance should purchase some minutes of security review. That trade-off, done consistently, makes vibe coding not just fast — but responsibly fast.

Caught feelings for cybersecurity? It’s okay, it happens. Follow us on LinkedIn and Instagram to keep the spark alive.